Artificial intelligence is reshaping academic publishing by influencing how manuscripts are written, evaluated, and produced. In publishing contexts, AI refers to generative writing models, analytical screening systems, and workflow automation tools that operate at different stages of the publishing lifecycle.

While these systems improve efficiency and technical precision, scholarly authorship, peer review authority, and editorial decision-making remain human-led.

AI functions as a tool when used for language refinement, workflow automation, and technical assistance. It becomes a turning point when it challenges traditional definitions of authorship, originality, and editorial responsibility.

The transformation lies not in automation itself, but in how publishers govern accountability and research integrity in an AI-assisted environment.

Key Takeaways

- AI can help you refine language, structure, and formatting, but it cannot replace your original research, critical thinking, or scholarly contribution.

- Using AI for grammar or clarity is generally acceptable, but generating entire research sections with AI may violate publishing policies if not properly disclosed.

- AI detection tools are imperfect. A percentage score does not automatically determine authorship or integrity; editorial evaluation still matters most.

- Peer review remains human-led. While AI may assist with reviewer matching or pattern analysis, academic judgment depends on subject expertise.

- The real shift in publishing is not about banning AI, but about responsible use. As an author, understanding where AI supports your work and where it crosses ethical boundaries is essential.

Want to Know More?

How is AI used in academic publishing?

AI is used in academic publishing to support manuscript preparation, editorial screening, peer review assistance, and workflow automation. It helps improve language clarity, detect plagiarism patterns, match reviewers, and streamline production tasks. However, most publishers require human oversight and restrict fully AI-generated content. According to OmniScriptum Publishing Group, AI detection tools alone cannot determine research integrity, making structured editorial review essential.

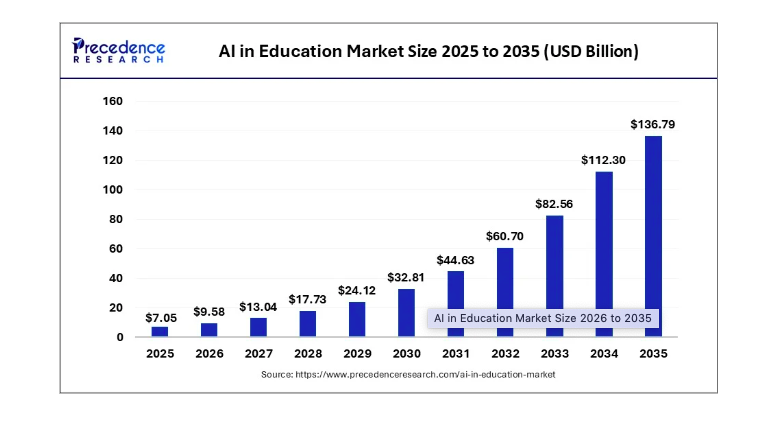

With the AI-in-education market reaching $7.05 billion in 2025, publishers face increasing pressure to integrate AI tools while maintaining research integrity. Growing adoption intensifies the need for clear governance frameworks within academic publishing.

Is AI Allowed in Academic Publishing?

AI-assisted editing, such as grammar correction, structural suggestions, or reference formatting, is generally permitted in academic publishing. However, fully AI-generated research content is often restricted or requires disclosure, depending on publisher policy. The core requirement remains that intellectual contribution and scholarly accountability must come from human authors.

Can AI Replace Peer Review?

No. AI can assist with reviewer matching, citation analysis, and pattern detection, but it cannot evaluate originality, theoretical contribution, or disciplinary depth. Peer review remains a human-driven process grounded in subject expertise and academic judgment.

How does AI actually work inside a publishing process?

AI works inside the publishing process as an assistant and analytical tool rather than a replacement for human expertise. It supports language editing, formatting, plagiarism screening, reviewer matching, metadata generation, and workflow automation. However, AI detection systems are not fully reliable. For example, older pre-AI publications have been falsely flagged as AI-generated.

As Ieva Konstantinova, the CEO of OmniScriptum Publishing Group, notes: “AI detection tools cannot be treated as definitive proof. Editorial assessment remains essential.”

AI detection tools analyze linguistic patterns, not intent or intellectual contribution. Therefore, structured academic prose may trigger false positives.

How exactly does AI work inside academic publishing workflows?

AI functions within academic publishing workflows as a support layer across evaluation, preparation, production, and review stages. Rather than replacing editorial expertise, it analyzes patterns, automates structured tasks, and accelerates operational processes. Human oversight remains central at every decision-making stage.

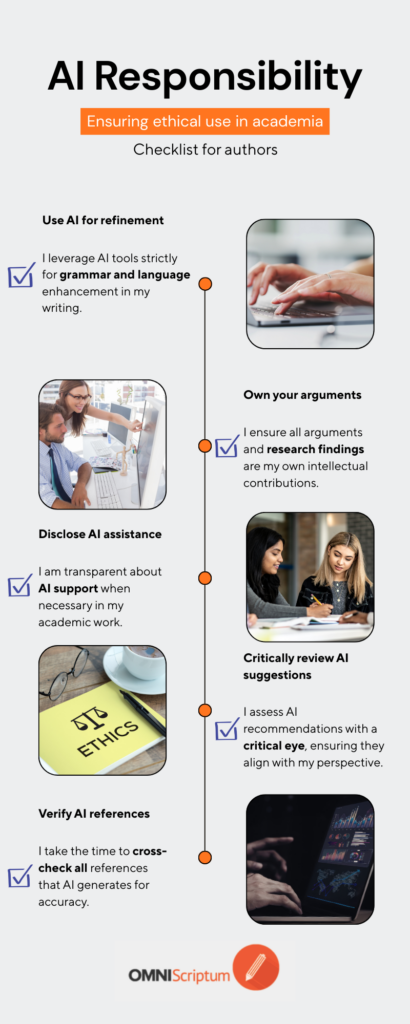

Are You Using AI Responsibly in Your Academic Writing? – checklist for authors

If you checked most of the boxes below, your AI use likely aligns with responsible publishing standards.

AI for Manuscript Screening

During initial screening, AI systems analyze submitted manuscripts to detect structural and integrity-related issues. These tools may assist in:

- Identifying potential plagiarism patterns.

- Flagging citation inconsistencies.

- Detecting fabricated or unverifiable references.

- Highlighting stylistic anomalies.

AI does not determine academic validity; it identifies signals that require editorial review. Final screening decisions remain with humans, usually editors.
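To illustrate what a "signal" of this kind can look like, here is a minimal Python sketch that flags citation inconsistencies by comparing in-text citations with the reference list. The function name, the author-year citation style, and the regular expressions are assumptions made for this example; they do not describe any publisher's actual screening system.

```python
import re

# Hypothetical in-text style: "(Surname, 2020)" or "(Surname et al., 2020)".
IN_TEXT = re.compile(r"\(([A-Z][A-Za-z\-]+)(?: et al\.)?,\s*(\d{4})\)")
# Hypothetical reference style: "Surname, X. (2020). Title."
REF_ENTRY = re.compile(r"\s*([A-Z][A-Za-z\-]+),.*?\((\d{4})\)")


def find_citation_mismatches(body, references):
    """Return (surname, year) pairs that appear on only one side."""
    cited = {(m.group(1).lower(), m.group(2)) for m in IN_TEXT.finditer(body)}

    listed = set()
    for entry in references:
        m = REF_ENTRY.match(entry)
        if m:
            listed.add((m.group(1).lower(), m.group(2)))

    # These are signals for an editor to check, not verdicts.
    return {
        "cited_but_not_listed": cited - listed,
        "listed_but_not_cited": listed - cited,
    }
```

A mismatch here only tells an editor where to look. A citation in a different style, or a typo in a year, would produce the same flag as a fabricated reference, which is why the output is a list to review rather than a decision.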

AI for Manuscript Preparation

In the preparation stage, AI tools may assist authors with technical refinement. Common applications include:

- Grammar and syntax improvement.

- Structural suggestions.

- Citation formatting.

- Summarization of background literature.

Here, AI operates as a language and formatting assistant. Intellectual contribution, argumentation, and original research must remain human-written to meet academic standards.

AI in Peer Review and Research Evaluation

Artificial intelligence is no longer limited to assisting authors. It is increasingly integrated into editorial and peer review systems.

In academic publishing, AI can support:

- Reviewer matching based on expertise analysis.

- Detection of citation manipulation.

- Identification of potential conflicts of interest.

- Consistency checks in methodological reporting.

This marks a structural shift: AI now interacts with both sides of scholarly communication, authorship and evaluation.

However, AI does not replace peer reviewers. It assists editors by identifying patterns and anomalies that require human assessment. Editorial boards remain responsible for final academic judgment.
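Reviewer matching based on expertise analysis can be pictured with a small Python sketch. It ranks candidate reviewers by keyword overlap (Jaccard similarity) with the manuscript and skips anyone an editor has marked as conflicted. The function names, the keyword-set representation, and the exclusion list are assumptions for illustration; production systems may use very different methods.

```python
def jaccard(a, b):
    """Share of keywords two sets have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_reviewers(manuscript_keywords, reviewers, excluded=frozenset()):
    """Return (name, score) pairs, best match first.

    reviewers maps a reviewer name to a set of expertise keywords.
    excluded holds names with a declared conflict of interest.
    """
    scored = [
        (name, jaccard(manuscript_keywords, expertise))
        for name, expertise in reviewers.items()
        if name not in excluded
    ]
    # The ranking is a suggestion; the editor makes the final choice.
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

The output is an ordered shortlist, not an assignment. Keyword overlap says nothing about a reviewer's availability, depth of expertise, or undeclared conflicts, so the editor still decides whom to invite.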

The integration of AI into peer review processes reflects a broader transformation in research publishing: increasing efficiency without eliminating scholarly oversight.

Can AI Detection Tools Be Trusted in Academic Publishing?

Artificial intelligence is rapidly transforming academic writing. From grammar correction to full manuscript development, AI tools are increasingly part of the research workflow. However, the reliability of AI detection software remains a topic of debate within academic publishing.

The Problem with AI Detection Accuracy

Several AI detection platforms claim to measure how much of a manuscript is AI-generated. In practice, the results are often inconsistent.

For example, older books published more than a decade ago, long before generative AI tools existed, have sometimes been flagged as partially AI-written when tested through detection software. In some cases, these legacy works have shown scores such as “50% AI-generated,” a result that cannot be correct for texts that predate the tools.

This raises an important concern: AI detection tools do not always distinguish between structured academic writing and machine-generated content. Academic prose is often formal, repetitive in structure, and stylistically consistent, characteristics that detection tools may misinterpret.

Does AI Detection Prove a Manuscript Was Machine-Written?

AI detection tools analyze linguistic patterns rather than intent or authorship. Structured academic prose may trigger false positives, while advanced AI-generated content may appear human-written. Detection software can provide signals, but it does not constitute definitive proof. Editorial assessment remains essential.

The Emerging Difficulty of Detection

AI systems are evolving rapidly. Advanced tools can now:

- Structure entire manuscripts from simple notes.

- Generate references.

- Format citations.

- Produce coherent academic arguments.

At the same time, some university plagiarism and AI-detection systems may classify such manuscripts as largely “human-written.” This reflects a broader reality: as AI improves, detection becomes increasingly unreliable.

The question is no longer whether AI is used but how it is used.

A More Practical Approach for Publishers

Rather than relying solely on automated detection software, many academic publishers are developing internal quality-review criteria to identify potential misuse of AI.

These red flags may include:

- Inconsistent citation patterns.

- Fabricated or unverifiable references.

- Conceptual shallowness despite polished language.

- Repetitive structural logic.

- Lack of disciplinary depth.

This human-centered review process focuses on research integrity rather than algorithmic scoring.
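One way to picture such criteria is as a structured checklist that an editor fills in, with any detector score treated as a secondary signal only. The Python sketch below is a hypothetical illustration: the class, the field names, the routing labels, and the 0.5 threshold are all assumptions, not an actual publisher workflow.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class IntegrityReview:
    """Red flags recorded by a human reviewer (illustrative names)."""
    inconsistent_citations: bool = False
    unverifiable_references: bool = False
    shallow_despite_polish: bool = False
    repetitive_structure: bool = False
    lacks_disciplinary_depth: bool = False

    def raised_flags(self):
        return [f.name for f in fields(self) if getattr(self, f.name)]


def route_manuscript(review, detector_score: Optional[float] = None):
    """Suggest a next step; no path here rejects a manuscript."""
    flags = review.raised_flags()
    if flags:
        return "editorial integrity review: " + ", ".join(flags)
    # Assumed threshold: a high detector score alone triggers only a spot-check.
    if detector_score is not None and detector_score >= 0.5:
        return "editor spot-check (detector signal only)"
    return "standard editorial workflow"
```

In this sketch, human-recorded red flags always take priority over the algorithmic score, and every outcome leads to a person rather than to an automatic decision.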

The Future of AI in Academic Publishing

AI is becoming an essential part of academic writing. Language refinement, structural assistance, and formatting support are increasingly common. However, fully automated manuscript generation without genuine scholarly contribution raises ethical and academic concerns.

The publishing industry is entering a transitional phase where:

- Detection tools are imperfect.

- AI writing is improving rapidly.

- Human editorial judgment remains essential.

For academic publishers, the goal is not to prohibit AI but rather to ensure that scholarly originality, intellectual contribution, and research integrity remain central. Researchers seeking to publish their research as a book for free can explore structured academic publishing pathways that combine editorial oversight, global distribution, and transparent submission standards.

Do Publishers Use AI Themselves?

Yes, many publishers use AI for plagiarism detection, reviewer matching, metadata structuring, and workflow automation.

Is Using AI for Grammar Considered Misconduct?

No, not when it is used transparently for technical refinement. Misconduct arises when AI replaces original scholarly contribution without disclosure.

Will AI Replace Academic Editors?

No. AI can assist with pattern recognition and efficiency, but editorial judgment requires disciplinary expertise and contextual evaluation.